Change of Variables in Multiple Integrals

Given a function f(x) of a single variable, and some other variable y related to x by a differentiable function x(y), the integral of f(x) can be converted to an integral with respect to y by the simple relation

$$\int f(x)\,dx = \int f\big(x(y)\big)\,\frac{dx}{dy}\,dy$$
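As a quick numerical check of this one-variable rule, the Python sketch below evaluates both sides with a midpoint rule. The integrand cos(x), the interval [0, π/2], the substitution x(y) = y², and the helper `midpoint_integral` are all illustrative choices, not taken from the text.

```python
import math


def midpoint_integral(g, a, b, n=10_000):
    """Approximate the integral of g over [a, b] with the composite midpoint rule."""
    h = (b - a) / n
    return h * sum(g(a + (k + 0.5) * h) for k in range(n))


# Left side: integrate f(x) = cos(x) directly over x in [0, pi/2] (exact value 1).
direct = midpoint_integral(math.cos, 0.0, math.pi / 2)

# Right side: substitute x(y) = y**2, so dx/dy = 2y and the limits become y in [0, sqrt(pi/2)].
substituted = midpoint_integral(
    lambda y: math.cos(y * y) * 2 * y, 0.0, math.sqrt(math.pi / 2)
)

print(direct, substituted)  # both approximately 1
```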

However, for multiple integrals the correct procedure for changing variables is not quite so simple. The first person to consider this systematically was apparently Euler, and the topic has continued to be a source of interesting ideas to the present day. To illustrate, consider the double integral

$$\iint f(x,y)\,dx\,dy$$

and suppose we wish to express this in terms of two other variables u and v that are related to x and y by known functions x(u,v) and y(u,v). We can replace x and y in the integrand with these functions of u and v, but we must also replace the product of the differentials dx and dy with some function of u and v times the product of the differentials du and dv. Of course, we have the total differentials

$$dx = \frac{\partial x}{\partial u}\,du + \frac{\partial x}{\partial v}\,dv, \qquad dy = \frac{\partial y}{\partial u}\,du + \frac{\partial y}{\partial v}\,dv$$

so our first thought might be to simply form the product of these to give dx dy in terms of du and dv. However, the resulting expression also includes terms in (du)² and (dv)², and it doesn’t appear (at least superficially) that it can be reconciled with the known expressions for converting between Cartesian and polar coordinates, for example. Many different approaches have been taken to deriving the correct transformation in a way that generalizes to arbitrary numbers of variables. It’s often said that we can simply regard the integrand of the double integral as a “2-form”, and replace the ordinary product dx dy with the wedge product

$$dx \wedge dy = \left(\frac{\partial x}{\partial u}\frac{\partial y}{\partial v} - \frac{\partial x}{\partial v}\frac{\partial y}{\partial u}\right) du \wedge dv$$

which follows from the total differentials if the wedge product is taken to be anticommutative, so that dv ∧ du = −du ∧ dv and du ∧ du = dv ∧ dv = 0.
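The contrast between the naive product and the wedge product can be seen numerically. The sketch below (Python; the function names and the sample point r = 2, θ = 0.7 are illustrative assumptions) represents dx and dy by their coefficients on du and dv at one point of the polar map x = r cos θ, y = r sin θ.

```python
import math


def naive_product(xu, xv, yu, yv):
    """Coefficients of (du)^2, du dv and (dv)^2 when dx*dy is expanded as an ordinary product."""
    return {"du^2": xu * yu, "du dv": xu * yv + xv * yu, "dv^2": xv * yv}


def wedge_coefficient(xu, xv, yu, yv):
    """Coefficient of du^dv in dx^dy, using du^du = dv^dv = 0 and dv^du = -du^dv."""
    return xu * yv - xv * yu


# Polar coordinates with u = r and v = theta, evaluated at a sample point.
r, theta = 2.0, 0.7
xu, xv = math.cos(theta), -r * math.sin(theta)
yu, yv = math.sin(theta), r * math.cos(theta)

naive = naive_product(xu, xv, yu, yv)
wedge = wedge_coefficient(xu, xv, yu, yv)
print(naive)  # nonzero (du)^2 and (dv)^2 terms; the du dv coefficient is r*cos(2*theta)
print(wedge)  # r, the familiar polar multiplier
```

The naive product leaves stray squared terms and a du dv coefficient of r cos 2θ, while the antisymmetric rule keeps only the combination that reduces to r.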

We can also treat differentials as mutually orthogonal vectors, and the multiplication of differentials as an operation that generalizes the familiar cross product. But the motivation for these rules is not entirely clear.

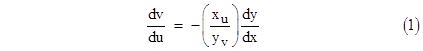

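One nice technique that seems to be rarely mentioned, but that provides a genuine derivation without resorting to explicit geometrical “area” reasoning, is as follows. We begin with the relation

$$\begin{pmatrix} dx \\ dy \end{pmatrix} = \begin{pmatrix} x_u & x_v \\ y_u & y_v \end{pmatrix} \begin{pmatrix} du \\ dv \end{pmatrix}$$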

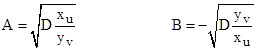

where $x_u$ denotes the partial derivative of x with respect to u, and so on. Now, for any given point (x,y) an incremental movement ds in any given direction will correspond to certain increments dx, dy, du, and dv, and if we let dx = A du and dy = B dv, the above equation can be written in the form

$$\begin{pmatrix} x_u - A & x_v \\ y_u & y_v - B \end{pmatrix} \begin{pmatrix} du \\ dv \end{pmatrix} = \begin{pmatrix} 0 \\ 0 \end{pmatrix}$$

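Since du and dv are not both zero, the determinant of the coefficient matrix must vanish, so we must have

$$(x_u - A)(y_v - B) - x_v y_u = 0, \quad\text{i.e.,}\quad AB - (A y_v + B x_u) + (x_u y_v - x_v y_u) = 0$$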
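To see that this determinant condition holds for every direction of ds, not just special ones, the Python sketch below picks hypothetical values for the four partial derivatives at a point, takes several arbitrary steps (du, dv), and evaluates the left side of the expanded condition with A = dx/du and B = dy/dv. The numbers are arbitrary, and the sketch assumes du and dv are both nonzero so that A and B are defined.

```python
# Hypothetical partial derivatives x_u, x_v, y_u, y_v at one point.
xu, xv, yu, yv = 2.0, 1.0, 0.5, 3.0
J = xu * yv - xv * yu  # the Jacobian determinant


def determinant_residual(du, dv):
    """Evaluate AB - (A*yv + B*xu) + J for the step (du, dv); du and dv must be nonzero."""
    dx = xu * du + xv * dv
    dy = yu * du + yv * dv
    A, B = dx / du, dy / dv
    return A * B - (A * yv + B * xu) + J


steps = [(0.3, -0.7), (1.0, 2.0), (-0.2, 0.05)]
residuals = [determinant_residual(du, dv) for du, dv in steps]
print(residuals)  # all zero up to rounding error
```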

Now, by varying the direction of the incremental displacement ds, we can give the ratio A/B = (dx/dy)(dv/du) any desired value, and in particular we can choose the direction such that

$$A y_v + B x_u = 0 \qquad (1)$$

For this direction we have

$$AB = -(x_u y_v - x_v y_u)$$

and hence

$$dx\,dy = (A\,du)(B\,dv) = -(x_u y_v - x_v y_u)\,du\,dv$$
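The special direction can also be computed explicitly. Combining $A y_v + B x_u = 0$ with $AB = -(x_u y_v - x_v y_u)$ gives $A^2 = J x_u / y_v$, where J denotes the Jacobian determinant. The Python sketch below uses hypothetical partial derivatives and assumes $J x_u / y_v > 0$ so that A is real; it finds A and B, recovers an actual step (du, dv) from the first row of the homogeneous system, and confirms that this step really has dx = A du and dy = B dv.

```python
import math

# Hypothetical partial derivatives at one point (illustrative values only).
xu, xv, yu, yv = 2.0, 1.0, 0.5, 3.0
J = xu * yv - xv * yu

# From A*yv + B*xu = 0 we get B = -A*yv/xu; then AB = -J gives A**2 = J*xu/yv.
# This sketch assumes J*xu/yv > 0 so that A is real.
A = math.sqrt(J * xu / yv)
B = -A * yv / xu

# First row of the homogeneous system: (xu - A) du + xv dv = 0, so pick du = 1.
du = 1.0
dv = (A - xu) * du / xv
dx = xu * du + xv * dv
dy = yu * du + yv * dv

print(A * B, -J)                 # equal: dx dy = AB du dv = -J du dv
print(dx / du - A, dy / dv - B)  # both ~0
```

The second row is satisfied automatically because the determinant vanishes, which is why dy/dv comes out equal to B.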

Also, the two conditions on A and B imply

|

|

|

|

|

|

|

It follows that

|

|

|

|

|

|

|

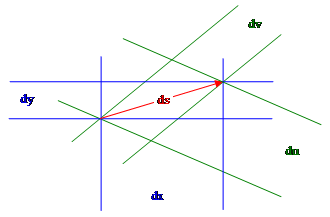

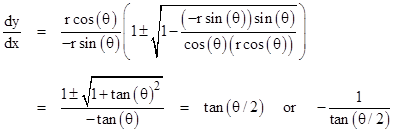

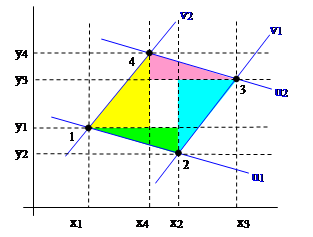

The figure below depicts the typical situation where, beginning at some point (x,y), we move incrementally along the path ds. We could choose any direction for this path, and the resulting ratios dy/dx and dv/du would differ. By choosing the direction that makes $A y_v + B x_u = 0$ we cause the areas enclosed in the regions dx dy and du dv to be equal. Obviously those two regions are not identical, nor even congruent, but they do have equal areas, as shown by the fact that they share the diamond-shaped central region, and the shaded blue regions equal the shaded green regions.

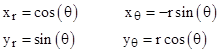

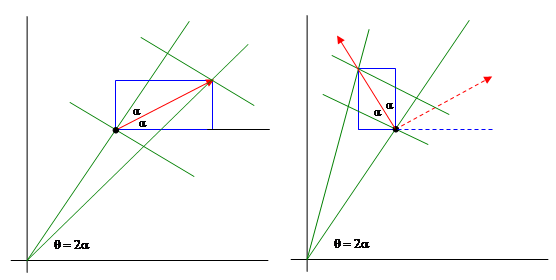

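For example, to determine the appropriate multiplier for conversion from Cartesian to polar coordinates, we set u = r and v = θ, and we have

$$x = r\cos\theta, \qquad y = r\sin\theta$$

$$x_r = \cos\theta, \quad x_\theta = -r\sin\theta, \quad y_r = \sin\theta, \quad y_\theta = r\cos\theta$$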

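and the determinant is

$$\begin{vmatrix} \cos\theta - A & -r\sin\theta \\ \sin\theta & r\cos\theta - B \end{vmatrix} = AB - (A r\cos\theta + B\cos\theta) + r$$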

Choosing A and B to make A r cos(θ) + B cos(θ) = 0, we get A = 1 and B = −r, so we have the result

$$dx\,dy = AB\,dr\,d\theta = -r\,dr\,d\theta$$
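The polar example can be checked at any sample point. The Python sketch below (the function name and the point r = 2, θ = 0.7 are illustrative) computes the four partial derivatives, confirms that the choice A = 1, B = −r satisfies $A y_\theta + B x_r = 0$ and makes the determinant vanish, and compares AB with the Jacobian r.

```python
import math


def polar_check(r, theta):
    """Return (A, B, condition, determinant, jacobian) for x = r cos(theta), y = r sin(theta)."""
    xu, xv = math.cos(theta), -r * math.sin(theta)  # x_r, x_theta
    yu, yv = math.sin(theta), r * math.cos(theta)   # y_r, y_theta
    A, B = 1.0, -r                                  # the choice made in the text
    condition = A * yv + B * xu                     # A r cos(theta) + B cos(theta)
    determinant = (xu - A) * (yv - B) - xv * yu     # must vanish
    jacobian = xu * yv - xv * yu                    # r cos^2 + r sin^2 = r
    return A, B, condition, determinant, jacobian


A, B, condition, determinant, jacobian = polar_check(2.0, 0.7)
print(A * B, jacobian)  # AB = -r while the Jacobian is +r
```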

The minus sign might seem unexpected, but notice in the preceding figure that if the integration limits in terms of x and y are both increasing, then the corresponding integration limits for u (i.e., r) are also increasing but the limits for v (i.e., θ) are decreasing, so the negative sign is correct. On the other hand, if we reverse the integration limits of θ so that they are increasing, then the sign becomes positive.
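The sign rule can be illustrated with a rough numerical experiment. Taking the unit quarter disc as an assumed example region (its area is π/4), the sketch below forms midpoint sums of −r dr dθ with the θ limits running either downward or upward; the helper name is hypothetical.

```python
import math


def integrate_minus_r(theta_start, theta_end, n=200):
    """Midpoint double sum of (-r) dr dtheta for r in [0, 1] and theta from theta_start to theta_end.

    dtheta is negative when theta_end < theta_start, i.e. when the theta limits decrease.
    """
    dr = 1.0 / n
    dtheta = (theta_end - theta_start) / n
    total = 0.0
    for i in range(n):
        r = (i + 0.5) * dr
        for _ in range(n):  # the integrand does not depend on theta
            total += (-r) * dr * dtheta
    return total


quarter_disc_area = math.pi / 4
decreasing = integrate_minus_r(math.pi / 2, 0.0)  # theta limits decreasing
increasing = integrate_minus_r(0.0, math.pi / 2)  # theta limits increasing
print(decreasing, increasing, quarter_disc_area)  # about +pi/4, -pi/4, pi/4
```

With decreasing θ limits the factor −r reproduces the positive area; with increasing limits the integrand must be +r instead.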

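This approach also gives the direction of the “diagonal” path ds explicitly. From the equations

$$A = \frac{dx}{du}, \qquad B = \frac{dy}{dv}, \qquad du = \frac{y_v\,dx - x_v\,dy}{x_u y_v - x_v y_u}, \qquad dv = \frac{x_u\,dy - y_u\,dx}{x_u y_v - x_v y_u}$$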

combined with equation (1), $A y_v + B x_u = 0$, we have

$$x_u x_v \left(\frac{dy}{dx}\right)^2 - 2 x_u y_v\,\frac{dy}{dx} + y_u y_v = 0$$

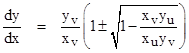

Solving this for dy/dx gives the two possible directions

$$\frac{dy}{dx} = \frac{x_u y_v \pm \sqrt{x_u y_v\,(x_u y_v - x_v y_u)}}{x_u x_v}$$
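The two directions can be computed directly from the partial derivatives. The Python sketch below uses hypothetical function names and sample values, and assumes $x_u x_v \ne 0$ and $x_u y_v (x_u y_v - x_v y_u) \ge 0$ so that both roots are real. It evaluates the formula, then checks each slope against the condition $A y_v + B x_u = 0$ by inverting the linear relation to get du and dv for the step dx = 1, dy = dy/dx.

```python
import math


def primary_slopes(xu, xv, yu, yv):
    """Both roots dy/dx of xu*xv*m**2 - 2*xu*yv*m + yu*yv = 0 (assumed real, with xu*xv != 0)."""
    J = xu * yv - xv * yu
    root = math.sqrt(xu * yv * J)
    return (xu * yv + root) / (xu * xv), (xu * yv - root) / (xu * xv)


def direction_condition(xu, xv, yu, yv, slope):
    """Return A*yv + B*xu for the step dx = 1, dy = slope, where A = dx/du and B = dy/dv."""
    J = xu * yv - xv * yu
    dx, dy = 1.0, slope
    du = (yv * dx - xv * dy) / J  # invert the 2x2 linear relation
    dv = (xu * dy - yu * dx) / J
    A, B = dx / du, dy / dv
    return A * yv + B * xu


# Hypothetical partial derivatives at one point.
xu, xv, yu, yv = 2.0, 1.0, 0.5, 3.0
slopes = primary_slopes(xu, xv, yu, yv)
print(slopes)
print([direction_condition(xu, xv, yu, yv, m) for m in slopes])  # both ~0
```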

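For the conversion from Cartesian to polar coordinates this gives

$$\frac{dy}{dx} = \frac{-\cos\theta \pm 1}{\sin\theta}, \quad\text{i.e.,}\quad \frac{dy}{dx} = \tan\frac{\theta}{2} \quad\text{or}\quad \frac{dy}{dx} = -\cot\frac{\theta}{2}$$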

This shows that the first “primary direction” of the incremental segment ds, i.e., the direction of the diagonal of the parallelograms of equal area for the rectangular and polar coordinates at a given point, makes an angle with the x axis equal to half the angle θ between the x axis and the ray from the origin to that point. The second primary direction is perpendicular to the first. This is depicted in the figures below.
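For the polar case, the two slopes should equal tan(θ/2) and −cot(θ/2). The sketch below repeats the root formula as a self-contained, hypothetical helper and compares it with these half-angle expressions at an arbitrary sample point r = 1.5, θ = 0.8.

```python
import math


def primary_slopes(xu, xv, yu, yv):
    """Both roots dy/dx of xu*xv*m**2 - 2*xu*yv*m + yu*yv = 0 (assumed real, with xu*xv != 0)."""
    J = xu * yv - xv * yu
    root = math.sqrt(xu * yv * J)
    return (xu * yv + root) / (xu * xv), (xu * yv - root) / (xu * xv)


r, theta = 1.5, 0.8  # sample point in the first quadrant
xu, xv = math.cos(theta), -r * math.sin(theta)
yu, yv = math.sin(theta), r * math.cos(theta)

m1, m2 = primary_slopes(xu, xv, yu, yv)
half_angle = (math.tan(theta / 2), -1.0 / math.tan(theta / 2))
print(sorted((m1, m2)), sorted(half_angle))  # same pair of slopes
print(m1 * m2)  # -1: the two primary directions are perpendicular
```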

These drawings are somewhat distorted because of the large increments we’ve used to represent the differentials, in order to make the components visible. In the limit as the increments become infinitesimally small, the enclosed regions (in this example) become rectangles, and it’s easy to see that the relevant regions have equal areas precisely when the diagonals bisect the corners, because this makes the rectangles similar with a common diagonal.

The approach generalizes immediately to integrals of any multiplicity. For example, consider the triple integral

$$\iiint f(x,y,z)\,dx\,dy\,dz$$

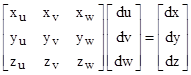

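and suppose the variables x, y, z are known functions of another set of variables u, v, w, so we have

$$\begin{pmatrix} dx \\ dy \\ dz \end{pmatrix} = \begin{pmatrix} x_u & x_v & x_w \\ y_u & y_v & y_w \\ z_u & z_v & z_w \end{pmatrix} \begin{pmatrix} du \\ dv \\ dw \end{pmatrix}$$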

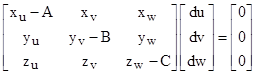

Then we can characterize the direction of any incremental segment ds in terms of quantities A, B, C such that

$$dx = A\,du, \qquad dy = B\,dv, \qquad dz = C\,dw$$

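so we have

$$\begin{pmatrix} x_u - A & x_v & x_w \\ y_u & y_v - B & y_w \\ z_u & z_v & z_w - C \end{pmatrix} \begin{pmatrix} du \\ dv \\ dw \end{pmatrix} = \begin{pmatrix} 0 \\ 0 \\ 0 \end{pmatrix}$$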

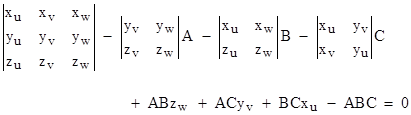

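The vanishing of the determinant gives the condition

$$ABC - \left(AB\,z_w + AC\,y_v + BC\,x_u\right) + \left(A\begin{vmatrix} y_v & y_w \\ z_v & z_w \end{vmatrix} + B\begin{vmatrix} x_u & x_w \\ z_u & z_w \end{vmatrix} + C\begin{vmatrix} x_u & x_v \\ y_u & y_v \end{vmatrix}\right) = \begin{vmatrix} x_u & x_v & x_w \\ y_u & y_v & y_w \\ z_u & z_v & z_w \end{vmatrix}$$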

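Thus for any direction corresponding to values of A, B, C such that the terms of first and second degree in this equation vanish, the value of ABC equals the Jacobian, just as we expect. The same result applies for any number n of variables, except that the product of the multipliers equals $(-1)^{n+1}$ times the Jacobian; this is the origin of the minus sign in the two-dimensional case.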
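The expansion of this determinant can be spot-checked numerically. The Python sketch below (helper names and the random test values are illustrative) compares the determinant of the shifted matrix with the expanded form, the full determinant minus the first-degree terms plus the second-degree terms minus ABC, for a few random matrices and random A, B, C.

```python
import random


def det3(m):
    """Determinant of a 3x3 matrix given as nested lists, by cofactor expansion."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def expanded_form(m, A, B, C):
    """D_xyz - (A D_yz + B D_xz + C D_xy) + (AB z_w + AC y_v + BC x_u) - ABC."""
    (xu, xv, xw), (yu, yv, yw), (zu, zv, zw) = m
    Dyz = yv * zw - yw * zv
    Dxz = xu * zw - xw * zu
    Dxy = xu * yv - xv * yu
    first = A * Dyz + B * Dxz + C * Dxy
    second = A * B * zw + A * C * yv + B * C * xu
    return det3(m) - first + second - A * B * C


rng = random.Random(0)
errors = []
for _ in range(5):
    m = [[rng.uniform(-2, 2) for _ in range(3)] for _ in range(3)]
    shifts = [rng.uniform(-2, 2) for _ in range(3)]  # A, B, C
    shifted = [[m[i][j] - (shifts[i] if i == j else 0.0) for j in range(3)] for i in range(3)]
    errors.append(det3(shifted) - expanded_form(m, *shifts))

print(max(abs(e) for e in errors))  # rounding-level only
```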

In the case of three variables the direction has two degrees of freedom but is subject to only one constraint, so there are infinitely many suitable directions. However, if we take the vanishing of the first-degree terms and of the second-degree terms as two separate conditions, there is only a finite set of suitable directions. Letting $D_{xyz}$, $D_{yz}$, $D_{xz}$, and $D_{xy}$ denote the determinants appearing in the above equation, we can impose the three conditions

$$A D_{yz} + B D_{xz} + C D_{xy} = 0, \qquad AB\,z_w + AC\,y_v + BC\,x_u = 0, \qquad ABC = D_{xyz}$$

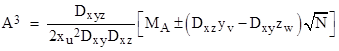

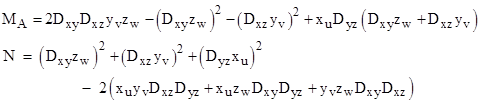

Solving these equations for A, B, and C, we get

|

|

|

|

|

|

|

where

|

|

|

|

|

The expressions for B³ and C³ are given by making the appropriate permutations.
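As a numerical alternative to a closed form, the three conditions can be solved by elimination: use the first condition to eliminate C, write B = tA so that the second condition becomes a quadratic in t, and obtain A³ from ABC = D_xyz. The Python sketch below follows that elimination (our own route, not necessarily the author's), keeps only real solutions, and assumes $D_{xy} \ne 0$, a nonzero leading coefficient, and a nonnegative discriminant. The example matrix is hypothetical, chosen so that real solutions exist.

```python
import math


def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def minors(m):
    """Principal 2x2 minors D_yz, D_xz, D_xy of m = [[x_u, x_v, x_w], [y_u, y_v, y_w], [z_u, z_v, z_w]]."""
    (xu, xv, xw), (yu, yv, yw), (zu, zv, zw) = m
    return yv * zw - yw * zv, xu * zw - xw * zu, xu * yv - xv * yu


def solve_three_conditions(m):
    """Real solutions (A, B, C) of the three conditions, by eliminating C and setting B = t*A."""
    (xu, _, _), (_, yv, _), (_, _, zw) = m
    Dyz, Dxz, Dxy = minors(m)
    D = det3(m)
    # Quadratic a*t**2 + b*t + c = 0 from the first two conditions.
    a = Dxz * xu
    b = Dyz * xu + Dxz * yv - zw * Dxy
    c = Dyz * yv
    disc = b * b - 4 * a * c  # assumed >= 0 (real directions only)
    solutions = []
    for sign in (1.0, -1.0):
        t = (-b + sign * math.sqrt(disc)) / (2 * a)
        cube = -D * Dxy / (t * (Dyz + t * Dxz))            # A**3, from ABC = D
        A = math.copysign(abs(cube) ** (1.0 / 3.0), cube)  # real cube root
        B = t * A
        C = -(A * Dyz + B * Dxz) / Dxy                      # from the first condition
        solutions.append((A, B, C))
    return solutions


def residuals(m, A, B, C):
    """Left sides of the three conditions, each of which should be zero."""
    (xu, _, _), (_, yv, _), (_, _, zw) = m
    Dyz, Dxz, Dxy = minors(m)
    return (A * Dyz + B * Dxz + C * Dxy,
            A * B * zw + A * C * yv + B * C * xu,
            A * B * C - det3(m))


m = [[1.0, 0.0, 2.0], [0.0, 1.0, 0.0], [1.0, 0.0, 1.0]]  # hypothetical example, det = -1
solutions = solve_three_conditions(m)
for A, B, C in solutions:
    print((A, B, C), residuals(m, A, B, C))
```

Each real root t also admits two complex cube roots for A, which this sketch ignores since only real directions are sought.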

Incidentally, it might seem as if the factor corresponding to a change of variables would be self-evident geometrically, and indeed it is fairly direct (especially in particular cases), but deducing the general formula, even in just two dimensions, is not completely trivial. To illustrate, consider a parallelogram in the two-dimensional plane, as shown in the figure below.

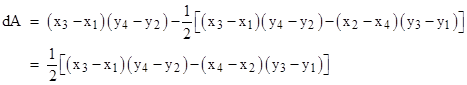

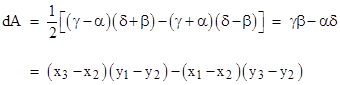

The intervals from $u_1$ to $u_2$ and $v_1$ to $v_2$ correspond to differentials du and dv respectively, and the region inside the parallelogram bounded by these values represents the differential area dA. To express dA as some multiple of (du)(dv), we first note that the area inside the bounded region equals the area of the circumscribing rectangle minus the areas of the four excluded triangular regions. Each of those triangular regions is half of a rectangle, and those four rectangles precisely cover the circumscribing rectangle, except that the innermost interior rectangle is covered twice. Thus we can immediately express the area as

|

|

|

|

|

|

|

If the u and v coordinates were configured differently there could be a region of overlap in the center instead of an uncovered region, so we would add instead of subtracting that region, but in that case the order of the corresponding indexed coordinates would be reversed, which also has the effect of reversing the sign of the last term. Hence the net result is the same. Now, notice that if we put

|

|

|

|

|

|

|

then the area can be written as

|

|

|

|

|

|

|

Now, the partial derivatives can be written as

|

|

|

|

|

|

|

so the area can be expressed as

$$dA = (x_u y_v - x_v y_u)\,du\,dv$$

The laboriousness of this derivation shows the usefulness of the technique discussed previously.
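The bounding-rectangle argument from the parallelogram discussion can be checked numerically for the configuration in which each vertex touches a different side of the circumscribing rectangle. The Python sketch below assumes edge vectors of the form (a1, a2) and (−b1, b2) with all four numbers positive, takes them from the polar map at a sample point (an illustrative choice), and compares "rectangle minus four triangles" with $(x_u y_v - x_v y_u)\,du\,dv$.

```python
import math


def area_by_bounding_box(edge_u, edge_v):
    """Parallelogram area as circumscribing rectangle minus four right triangles.

    Assumes edge_u = (a1, a2) and edge_v = (-b1, b2) with a1, a2, b1, b2 > 0, so each
    vertex lies on a different side of the rectangle.
    """
    a1, a2 = edge_u
    b1, b2 = -edge_v[0], edge_v[1]
    rectangle = (a1 + b1) * (a2 + b2)
    triangles = 2 * (a1 * a2 / 2) + 2 * (b1 * b2 / 2)  # each is half of an a- or b-rectangle
    return rectangle - triangles


# Small steps du, dv in the polar map (u = r, v = theta) at a first-quadrant point.
r, theta, du, dv = 2.0, 0.7, 1e-3, 2e-3
xu, xv = math.cos(theta), -r * math.sin(theta)
yu, yv = math.sin(theta), r * math.cos(theta)
edge_u = (xu * du, yu * du)  # change in (x, y) for a step du
edge_v = (xv * dv, yv * dv)  # change in (x, y) for a step dv

dA = area_by_bounding_box(edge_u, edge_v)
jacobian_form = (xu * yv - xv * yu) * du * dv
print(dA, jacobian_form)  # equal up to rounding (both r du dv here)
```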